Modern systems rarely fail because they cannot process individual requests. They fail because growth changes the structure of the system faster than teams change the architecture. As traffic increases, services multiply, integrations deepen, and latency stops being a local performance problem. It becomes a coordination problem across producers, consumers, databases, retries, queues, and teams. In that environment, Event-Driven Architecture Patterns matter because they change how systems absorb change, not just how they exchange data.

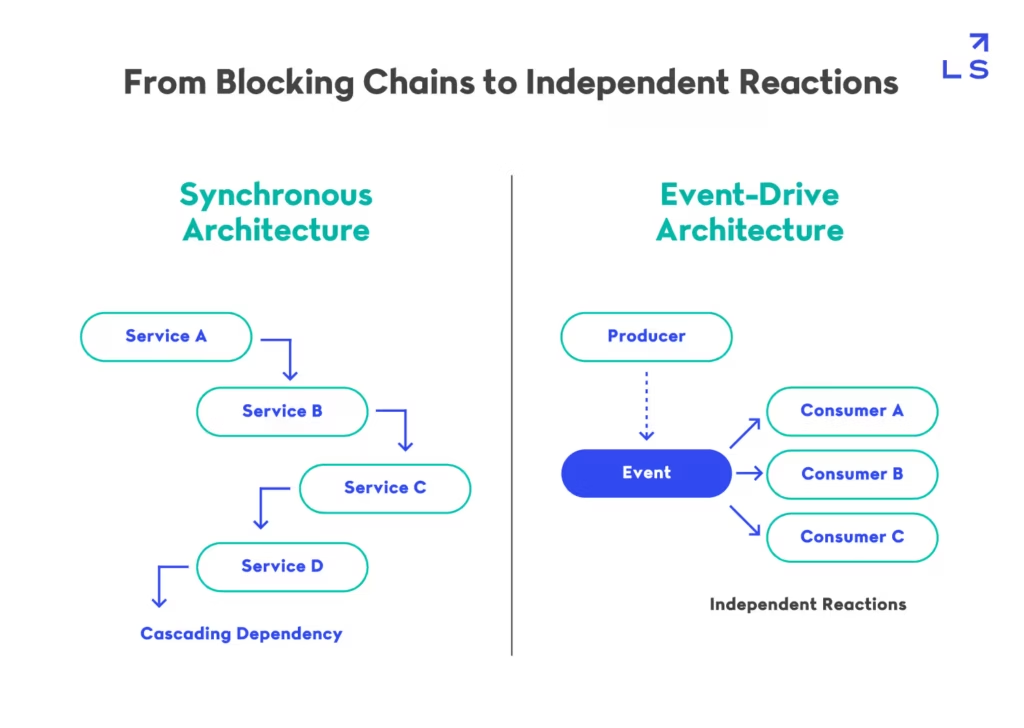

The pressure is most visible in high-throughput environments. A system that handles thousands or millions of state changes per hour cannot depend on tightly sequenced interactions between services without creating contention. Every synchronous dependency adds a timing assumption. Every direct integration adds another coupling point. Over time, what looked like a clean service architecture becomes a fragile chain of blocking calls, duplicated logic, and cascading retries. This is why scaling distributed systems is not only about compute capacity, but also about reducing the cost of coordination.

This contrast between coordinated execution and independent reaction is what separates traditional service architectures from event-driven systems.

This structural shift is the foundation on which event-driven architecture patterns operate at scale.

Many teams respond by adding brokers, streams, or queues, but that usually addresses transport before architecture. The result is an event-based implementation that still behaves like a tightly coupled system. Events are published, but ownership remains unclear. Consumers proliferate, but contracts are unstable. Throughput increases, but so does operational ambiguity. Without a clear model, asynchronous communication simply moves complexity into places that are harder to debug and harder to govern.

That is where Event-Driven Architecture Patterns become useful at a deeper level. They define how state changes propagate, how responsibilities are separated, and how systems evolve without forcing every service into the same execution path. Used well, they let high-throughput systems scale by turning coordination into reaction. Used poorly, they create hidden coupling, schema fragility, and failure chains that only appear under pressure.

This article examines Event-Driven Architecture Patterns from that system perspective. It breaks down the core patterns behind event-driven design, shows how they shape throughput and autonomy, explains the operational tradeoffs they introduce, and clarifies where they fail when architecture is replaced by tooling.

The Structural Role of Event-Driven Architecture Patterns in Distributed Systems

What Event-Driven Architecture Patterns Actually Represent

Event-Driven Architecture Patterns are often described in terms of messaging systems, but that framing misses their actual role. At a system level, they redefine how state changes propagate. Instead of invoking behavior directly, services emit facts about what has already happened, and other parts of the system react to those facts asynchronously.

This seemingly small shift changes the entire execution model. Control flow is no longer explicit or centrally orchestrated. It becomes distributed across time and across services. The benefit is that producers are no longer blocked by downstream dependencies, which is essential in high-throughput environments. The tradeoff is that causality becomes implicit. Understanding “why something happened” requires reconstructing event flows rather than tracing a request stack, which introduces a new class of operational complexity.

Why Traditional Architectures Break at Scale

Traditional service architectures rely on synchronous request chains to coordinate work. This works well when load is predictable and dependencies are stable, but it breaks under high throughput because every interaction introduces a timing dependency. As systems grow, these dependencies form long chains where latency compounds and failures propagate.

The issue is not just performance degradation, but systemic fragility. A slow or failing downstream service can stall upstream systems, creating cascading failures. Retry mechanisms amplify the problem by increasing load during failure conditions. This is a well-documented failure mode in distributed systems, particularly in tightly coupled microservice environments, as explored in Google’s SRE practices.

Event-Driven Architecture Patterns address this by removing the requirement for synchronous coordination. Instead of waiting for a response, systems emit events and continue processing. This decouples execution timelines, allowing systems to absorb spikes without forcing every component to scale simultaneously. However, it also shifts complexity into event ordering, delivery guarantees, and consistency management.

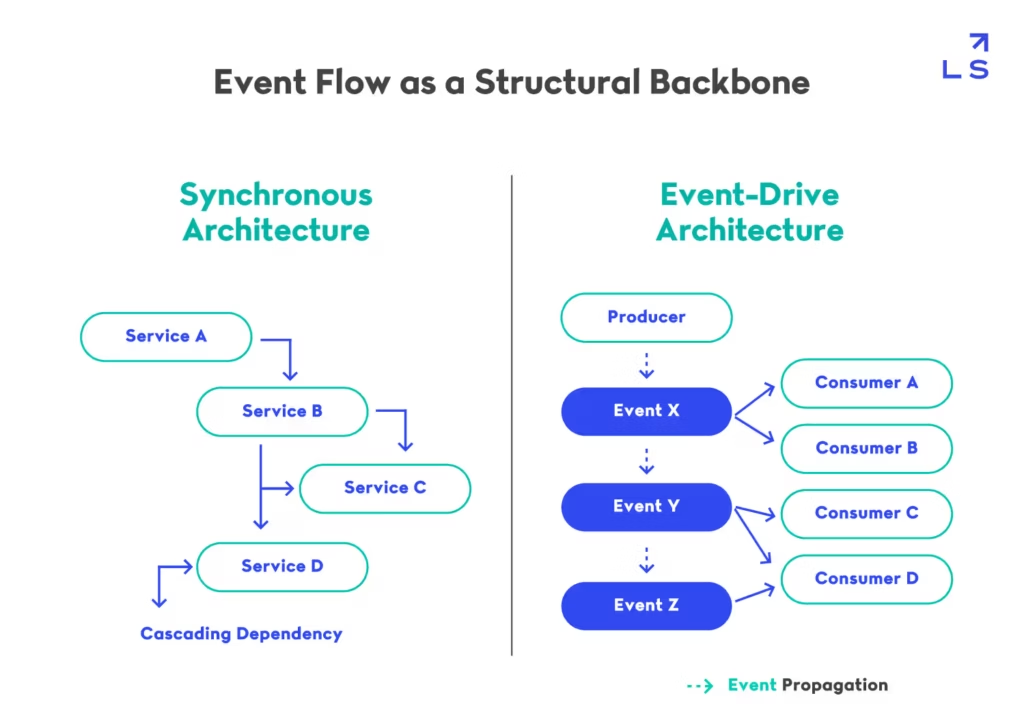

Event Flow as the Primary System Backbone

Once adopted correctly, Event-Driven Architecture Patterns stop being an integration mechanism and become the backbone of the system. Every meaningful state transition is represented as an event, and system behavior emerges from how those events are consumed and transformed.

This creates a system where scaling is no longer tied to specific services but to event throughput. Services can scale independently based on consumption needs, and new functionality can be introduced by adding new consumers rather than modifying existing producers. This is one of the key reasons event-driven systems align well with real-time data architectures, where streams act as continuous sources of truth rather than transient requests, as seen in modern streaming platforms.

The tradeoff is that events become long-lived contracts. Once emitted, they cannot be easily changed without affecting multiple consumers. This forces a level of schema discipline and governance that many teams underestimate. Without it, the same decoupling that enables scale becomes a source of fragmentation and instability.

What changes is not just how services communicate, but how dependencies are expressed across the system.

This shift turns execution from a controlled sequence into a distributed timeline, where system behavior emerges from event relationships rather than predefined call paths.

Decomposing Event-Driven Architecture Patterns into Core Design Patterns

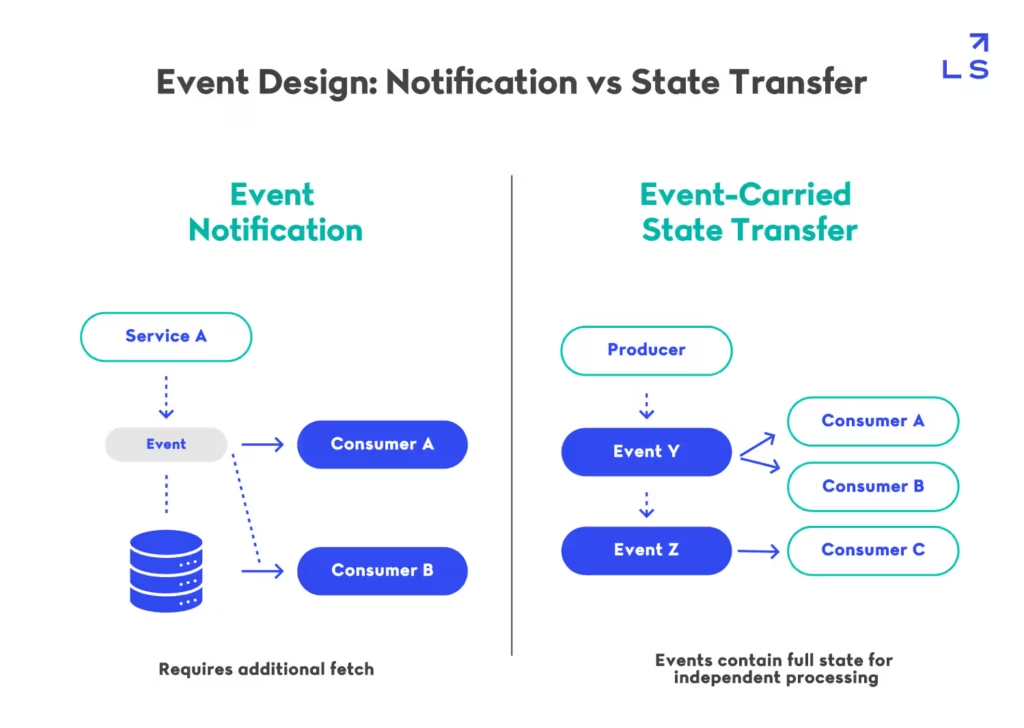

Event Notification vs Event-Carried State Transfer

At the core of event-driven systems lies a deceptively simple question: what exactly does an event carry? The answer defines how coupling re-enters the system. Event-Driven Architecture Patterns are not just about emitting events, but about deciding whether those events represent signals or state.

In the event notification model, events act as triggers. A producer emits a lightweight signal—something happened—but does not include the data required to act on it. Consumers must then fetch additional context from upstream services or databases before continuing processing. This keeps events small and avoids duplication, but it quietly reintroduces synchronous dependencies at consumption time. Under load, this pattern creates a fan-out of read operations, which can become a bottleneck across services.

In contrast, event-carried state transfer embeds all relevant data directly in the event. Consumers can process events independently, without making additional calls. This reduces coordination overhead and allows systems to scale more predictably under high throughput. However, it introduces different tradeoffs: larger payloads, potential data staleness, and significantly stricter schema evolution constraints. Once multiple consumers depend on a shared event structure, even small changes become costly.

This distinction is widely recognized in distributed systems design. As Martin Fowler outlines in his breakdown of event-driven models, notification-based events tend to preserve data ownership at the cost of coordination, while state-carrying events shift complexity into data modeling and versioning. Similarly, structured pattern references highlight how event-carried state transfer reduces downstream dependency chains by eliminating follow-up queries.

The difference between these two approaches is not just data format, but how dependency and coordination propagate through the system.

This decision determines whether your system scales through independence or accumulates hidden synchronization points over time.

Competing Consumers and Horizontal Scalability

Once events are flowing through the system, the next challenge is throughput. Event-Driven Architecture Patterns rely heavily on the competing consumer model to distribute work across multiple processors. Instead of a single consumer handling a stream of events, multiple consumers process events in parallel, allowing the system to scale horizontally without modifying producers.

This model is essential for high-throughput systems, but it introduces important tradeoffs. Parallel consumption weakens ordering guarantees unless events are explicitly partitioned. It also makes failure handling more complex. If a consumer fails mid-processing, the system must retry the event, which can result in duplicate execution.

This is why idempotency becomes a fundamental requirement rather than a best practice. Consumers must be designed so that processing the same event multiple times produces the same outcome. Without this property, retries under failure conditions can introduce subtle inconsistencies that are difficult to trace. Distributed queue systems explicitly acknowledge this behavior. For example, Amazon SQS guarantees at-least-once delivery, meaning duplicate processing is expected and must be handled at the application level.

The implication is that scalability is not just about adding consumers. It requires designing consumers that can tolerate reprocessing, partial failure, and out-of-order execution.

Event Sourcing as a State Reconstruction Model

A more advanced application of Event-Driven Architecture Patterns is event sourcing, where events are not just communication artifacts but the primary source of truth. Instead of storing the current state directly, the system stores the sequence of events that led to that state. State is reconstructed by replaying those events.

This approach enables powerful capabilities. Systems gain full auditability, the ability to reconstruct past states, and deterministic debugging. It also aligns naturally with real-time data systems, where streams represent continuous change rather than static snapshots. This is closely related to how streaming architectures treat logs as immutable sources of truth, a concept explored in Landskill’s breakdown of real-time data pipelines.

However, the tradeoffs are significant. Rebuilding state from long event streams can become computationally expensive, requiring snapshotting strategies. Schema evolution becomes more complex, since historical events must remain interpretable even as the system evolves. Mistakes in event design are effectively permanent, as events cannot be easily rewritten once stored.

As a result, event sourcing is most appropriate in domains where historical accuracy outweighs operational simplicity. Financial systems, auditing platforms, and systems with strict traceability requirements benefit from this model, while simpler domains often do not justify the added complexity.

Operationalizing Event-Driven Architecture Patterns in Production Systems

Designing Event Schemas as Contracts

Once Event-Driven Architecture Patterns are in place, the system stops being defined by APIs and starts being defined by events. At that point, the most critical design artifact is no longer the endpoint—it is the event schema. Events are not messages; they are contracts that multiple independent consumers rely on over time.

Unlike APIs, where changes are negotiated and failures are immediate, event schema issues propagate silently. A producer can change an event structure without breaking immediately, but downstream consumers may fail later, in different contexts, and without clear traceability. This delayed failure mode is one of the most dangerous aspects of event-driven systems.

Designing schemas requires thinking in terms of longevity. Events must remain interpretable long after they are emitted. This means:

- avoiding implicit assumptions about structure

- versioning explicitly rather than modifying in place

- treating fields as append-only whenever possible

This aligns with how event systems evolve in practice, where backward compatibility becomes more important than forward optimization. As outlined in the CloudEvents specification, consistency and structure are critical for interoperability across systems, but governance is what ensures they remain usable over time.

The implication is that schema design is no longer a local decision. It becomes a shared responsibility across teams, requiring coordination mechanisms that did not exist in synchronous systems.

Event Streams as System Boundaries

In traditional architectures, boundaries are defined by APIs. In Event-Driven Architecture Patterns, boundaries are defined by streams. Each event stream represents a domain of truth—a continuous record of state changes that other parts of the system can subscribe to.

This changes how systems are decomposed. Instead of designing around services, systems are designed around flows of information. A stream becomes the interface, and services become implementations that react to it.

This model is particularly visible in real-time data systems, where streams are treated as first-class architectural elements rather than integration mechanisms. This is consistent with how modern data pipelines are structured, where event streams act as the backbone for ingestion, transformation, and delivery, as explored in our analysis of real-time pipelines.

However, defining streams introduces a new tradeoff: granularity. If streams are too coarse, they become bottlenecks. If they are too fine, the system fragments into an unmanageable number of event types. The challenge is not just creating streams, but defining them at the right level of abstraction.

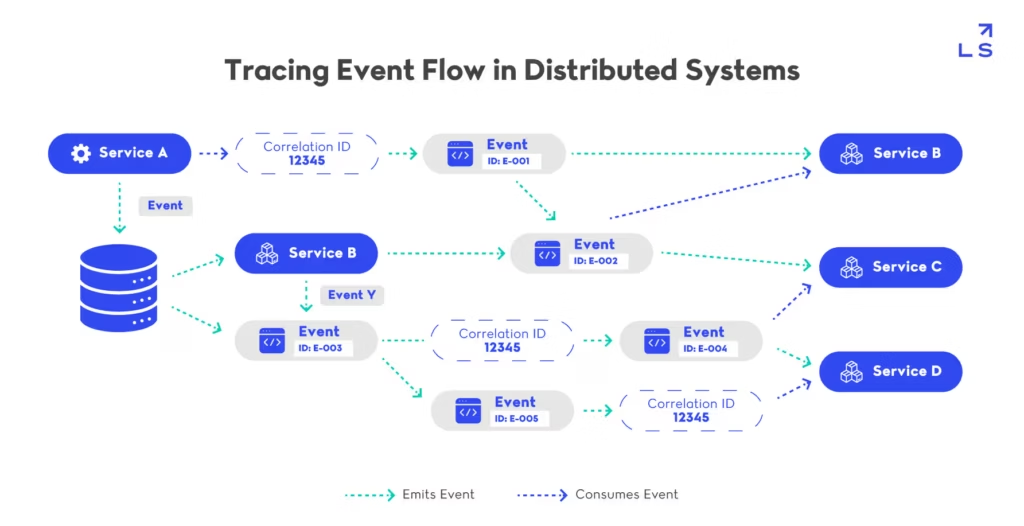

Observability in Asynchronous Systems

As Event-Driven Architecture Patterns remove synchronous coordination, they also remove visibility. In request-response systems, tracing a failure is straightforward: follow the call stack. In event-driven systems, there is no single path to follow—only a series of loosely connected reactions across time.

This makes observability fundamentally different. Instead of tracking requests, systems must reconstruct event flows:

- which event triggered which reaction

- how events propagate across services

- where delays or failures occur in the chain

This is where distributed tracing becomes essential, but it must be adapted to asynchronous systems. Tools like OpenTelemetry provide mechanisms to correlate events across services, but they require consistent instrumentation and context propagation to be effective.

The challenge is not tooling—it is discipline. Without consistent event metadata (correlation IDs, timestamps, causation links), even the best observability systems cannot reconstruct system behavior accurately.

This complexity becomes even more visible in frontend-heavy systems, where backend event flows must eventually surface as user-visible changes. Coordinating performance across these layers introduces additional constraints, particularly around latency and synchronization, which ties into broader frontend performance.

In practice, understanding behavior means reconstructing causality across events rather than following a single execution path.

This is why observability in event-driven systems depends less on tracing calls and more on designing events with enough context to be traceable across time.

Scaling Event-Driven Architecture Patterns Across Teams and Systems

Organizational Decoupling Through Event Flows

Event-Driven Architecture Patterns do not just decouple services—they decouple teams. When systems communicate through events rather than direct calls, ownership boundaries become clearer. Teams can publish events without coordinating downstream consumers, and consumers can evolve independently as long as they respect event contracts.

This model changes how systems grow. Instead of requiring synchronized deployments across multiple services, new functionality can be introduced by adding new consumers. Existing producers remain unchanged, which reduces the coordination overhead that typically slows down large engineering organizations.

However, this autonomy introduces a new problem: uncontrolled proliferation. Without governance, teams begin emitting overlapping or redundant events, creating fragmentation. Different teams may interpret similar domain concepts differently, leading to semantic drift across the system.

This is why event-driven systems require explicit ownership models. Events must belong to a domain, and that domain must be clearly defined. Without this, the same decoupling that enables scale becomes a source of inconsistency.

Throughput Scaling Without Central Coordination

At a system level, Event-Driven Architecture Patterns enable throughput scaling by removing the need for synchronized execution. Producers emit events independently, and consumers scale horizontally based on demand. This allows systems to handle spikes without requiring every component to scale simultaneously.

But removing coordination does not remove constraints—it redistributes them. Systems must now handle:

- eventual consistency across services

- out-of-order event delivery

- partial failure across distributed consumers

This is where architectural decisions begin to compound. For example, a system that relies heavily on event-carried state transfer may scale well under load but struggle with schema evolution. A system that uses notification-based events may preserve data ownership but introduce hidden read amplification.

These tradeoffs become more visible in real-time systems, where throughput and latency are tightly coupled. This is consistent with how streaming architectures differ from batch systems, where continuous processing introduces different scaling constraints.

The key insight is that scaling is not just about processing more events—it is about maintaining system coherence as those events propagate.

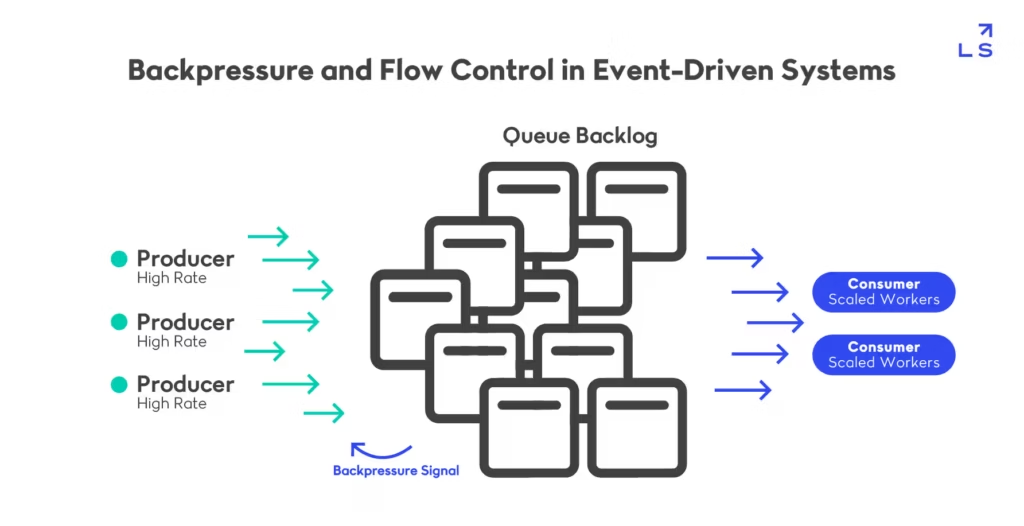

Backpressure and Flow Control as First-Class Concerns

As systems scale, the gap between producers and consumers becomes unavoidable. Event-Driven Architecture Patterns must account for this imbalance explicitly through backpressure and flow control mechanisms.

Without these controls:

- queues grow indefinitely

- latency increases unpredictably

- systems degrade under sustained load

Backpressure is not just an infrastructure concern—it is an architectural one. It defines how systems behave under stress. Some systems choose to buffer, accepting increased latency. Others drop or throttle events, prioritizing system stability over completeness.

Modern streaming systems address this through partitioning and consumer groups, allowing load to be distributed while maintaining some ordering guarantees. Kafka’s design explicitly incorporates these principles, treating partitions as units of parallelism and control.

However, these mechanisms introduce their own complexity. Partitioning affects data locality and ordering. Consumer groups require coordination. Flow control decisions can impact user-visible behavior.

This means that backpressure is not something you “add later.” It must be designed into the system from the beginning. At scale, systems are defined by the imbalance between event production and consumption.

This imbalance forces systems to either absorb pressure through buffering or propagate it upstream through backpressure mechanisms.

Where Event-Driven Architecture Patterns Fail in Real Systems

Hidden Coupling Through Event Design

Event-Driven Architecture Patterns are often adopted to eliminate tight coupling between services. However, in practice, they frequently reintroduce coupling in a less visible form—through event design itself. While services no longer call each other directly, they become dependent on shared event structures and semantics.

This form of coupling is harder to detect because it does not manifest as runtime failures. Instead, it appears during evolution. When a producer modifies an event schema, multiple downstream consumers may break silently or behave incorrectly. The absence of explicit contracts makes these dependencies difficult to track, especially as the number of consumers grows.

Over time, this leads to a paradox: systems appear loosely coupled at the infrastructure level but are tightly coupled at the data level. This is particularly problematic in large systems where multiple teams depend on the same event streams. Without governance, events become shared APIs without versioning discipline.

This is why event design must be treated with the same rigor as API design. Events introduce implicit contracts that evolve over time, and without careful versioning and ownership, those contracts become a source of hidden coupling across the system.

Event Explosion and Loss of Semantic Clarity

As systems scale, the number of events tends to grow rapidly. Each new feature introduces new event types, and different teams may define similar events with slight variations. Event-Driven Architecture Patterns can unintentionally lead to an explosion of poorly defined events, making the system harder to understand over time.

This problem is not just about quantity—it is about meaning. When events are not clearly defined within domain boundaries, their semantics drift. For example, multiple “UserUpdated” events may exist across different services, each representing slightly different concepts. Consumers must then interpret these events based on context, increasing cognitive load and the risk of incorrect assumptions.

This fragmentation reduces one of the core benefits of event-driven systems: clarity of system behavior. Instead of providing a clean representation of state changes, the event landscape becomes noisy and inconsistent.

Debugging and Temporal Complexity

One of the most underestimated challenges in Event-Driven Architecture Patterns is debugging. In synchronous systems, failures can often be traced through a call stack. In event-driven systems, there is no single execution path—only a sequence of events occurring across time and services.

This introduces temporal complexity. A failure may not occur immediately after an event is produced but may surface minutes or hours later in a downstream consumer. Reconstructing the sequence of events that led to the failure requires correlating data across multiple services, logs, and time windows.

The difficulty increases with retries and eventual consistency. Events may be processed multiple times, out of order, or delayed. This makes it harder to determine whether a system is behaving correctly or simply converging toward consistency over time.

Distributed tracing systems attempt to address this challenge, but they rely heavily on consistent instrumentation and context propagation. Without these, tracing becomes incomplete or misleading.

The key insight is that debugging in event-driven systems is not just harder—it is fundamentally different. It requires thinking in terms of timelines rather than call stacks.

Positioning Event-Driven Architecture Patterns in Modern System Design

When Event-Driven Systems Create Real Leverage

Event-Driven Architecture Patterns are often positioned as a default for modern distributed systems, but their value is highly contextual. They create real leverage when systems are defined by asynchronous change, not synchronous coordination. In domains where state transitions happen continuously—user activity streams, financial transactions, IoT data—event-driven models align naturally with how the system behaves.

In these scenarios, events are not an abstraction layer; they are the system’s native language. The architecture reflects reality rather than imposing structure on it. This alignment reduces impedance between business logic and system design, allowing teams to model change directly instead of translating it into request-response interactions.

However, this advantage disappears in systems that are inherently transactional or tightly coupled. In those cases, introducing Event-Driven Architecture Patterns adds indirection without reducing complexity. The result is often slower development, harder debugging, and unnecessary infrastructure overhead.

When Event-Driven Systems Amplify Complexity

While Event-Driven Architecture Patterns enable decoupling and scalability, they also amplify complexity when applied without clear boundaries. The same mechanisms that allow independent evolution—loosely coupled producers and consumers—also make it harder to enforce consistency and maintain shared understanding across the system.

This is particularly visible as systems grow. New services subscribe to existing events, new event types are introduced, and cross-domain dependencies accumulate. Over time, the system evolves into a network of implicit relationships that are difficult to reason about holistically.

The critical failure point is not technical—it is semantic. When domains are not clearly defined, events begin to overlap in meaning. Multiple teams may describe similar concepts differently, and consumers must interpret intent rather than rely on stable definitions.

This is where architectural discipline becomes essential. Event-driven systems require:

- clear domain ownership

- explicit event naming conventions

- governance over schema evolution

The complexity introduced by poorly defined boundaries and uncontrolled event growth is explored in more detail in this breakdown of real-world event-driven systems.

Without these, complexity grows faster than value.

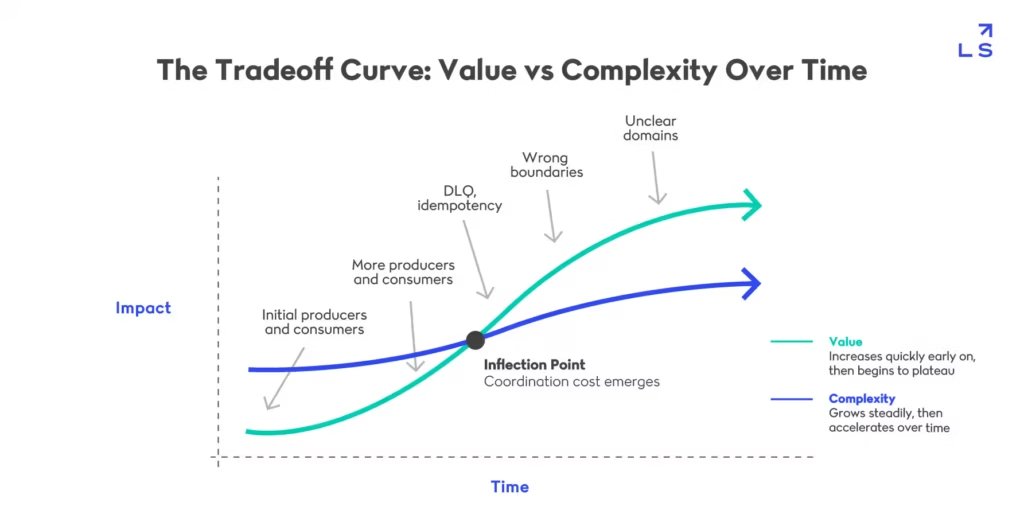

The Tradeoff Curve: Value vs Complexity Over Time

Event-Driven Architecture Patterns do not introduce linear complexity. They introduce compounding complexity. Early in a system’s lifecycle, the benefits are clear: decoupling, scalability, and flexibility. Teams can move faster by adding consumers instead of modifying existing services.

But as the system evolves, new constraints emerge:

- event proliferation

- schema management overhead

- debugging complexity

- cross-team coordination challenges

At a certain point, the cost of managing these constraints begins to grow faster than the value gained from decoupling. This is not a failure of the architecture—it is a natural consequence of distributed systems scaling across both technology and organization.

The key insight is that Event-Driven Architecture Patterns shift complexity rather than eliminate it. They move it:

- from synchronous coordination → asynchronous reasoning

- from API contracts → event semantics

- from runtime coupling → design-time discipline

As systems evolve, the relationship between value and complexity is not linear—it accelerates as event-driven systems scale.

Recognizing where your system sits on this curve determines whether event-driven architecture remains an advantage or becomes a liability.

Designing for Intent, Not Architecture

The final positioning of Event-Driven Architecture Patterns is not about choosing a paradigm—it is about aligning architecture with intent. Systems should not adopt event-driven models because they are modern, but because they reflect how the system needs to behave.

This requires asking a different set of questions:

- Is the system driven by continuous change or discrete transactions?

- Do components need to react independently or coordinate synchronously?

- Is scalability driven by throughput or consistency requirements?

When the answers point toward asynchronous, distributed change, event-driven architecture becomes a natural fit. When they do not, forcing it introduces unnecessary complexity.

This is the difference between architecture as a pattern and architecture as a decision. Event-Driven Architecture Patterns are powerful, but only when applied where they align with the system’s fundamental characteristics.